Why "Vibecoding" Only Gets You So Far

How the open-source community taught me the math behind AgX after I let AI hallucinate my color matrices.

I like to move fast. For RapidRAW, momentum is everything. But recently, that speed caught up with me in a public, colorful way.

In the v1.4.4 update to RapidRAW, I introduced the AgX tone mapper. If you follow rendering or photography communities, you know AgX is a relatively new tone mapper that has been adopted by Blender and darktable. It addresses the infamous "plastic" look that happens when bright digital colors clip to white. Instead of harsh color shifts, AgX gracefully desaturates highlights, mimicking how real film responds to light.

Users kept asking for it. I wanted to ship it. There was just one problem: my knowledge of advanced color science and matrix transformations was zero.

So, I did what I usually do when entering unknown territory. I used AI. And that’s where the trouble started.

The "Vibecoding" Trap

"Vibecoding" is a term that’s been floating around lately. It’s when you use LLMs to write code for concepts you don’t entirely understand, tweaking things based on "vibes" until the output looks roughly correct.

I asked an LLM to explain AgX to me, summarize the mathematical curves, and generate the WGSL shader code. The AI gave me the beautiful sigmoid curve logic, and it also handed me a couple of mat3x3 matrices to handle the color space conversions between sRGB, the internal AgX working space, and the display output.

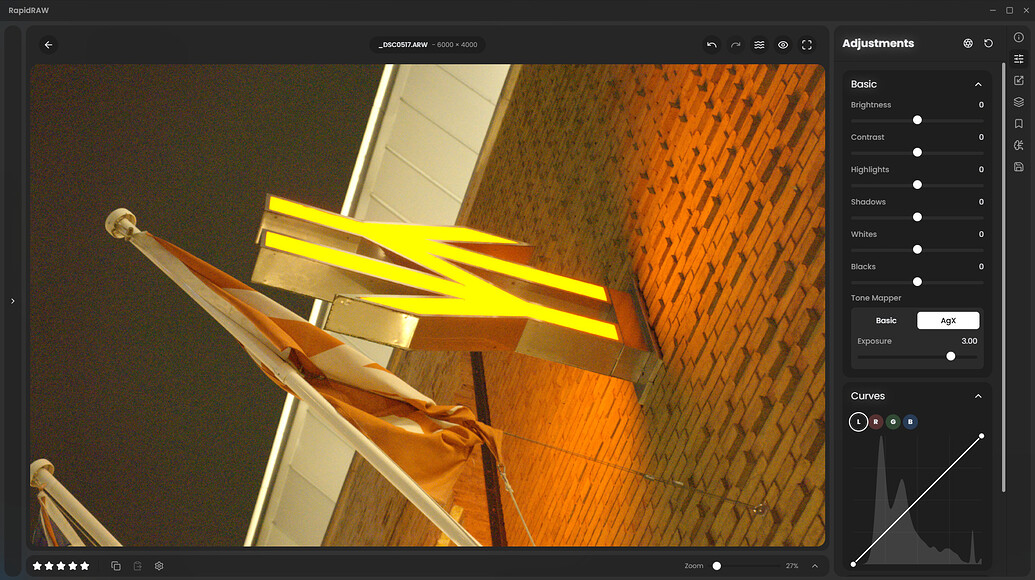

I plugged it in. It compiled. I dragged the exposure slider to +3 EV, and the image didn't break. The highlight rolloff looked smooth!

I was very happy. I shipped RapidRAW v1.4.4, wrote up a release post, and excitedly shared it on pixls.us, the open-source photography forum. One of my sample images showed a train with a British Rail logo. The logo looked a bit yellowish-orange, but I thought, “Well, that’s just how AgX handles extreme highlights. It preserves energy.”

The Community Steps In

It took exactly one day for the illusion to shatter.

A user commented:

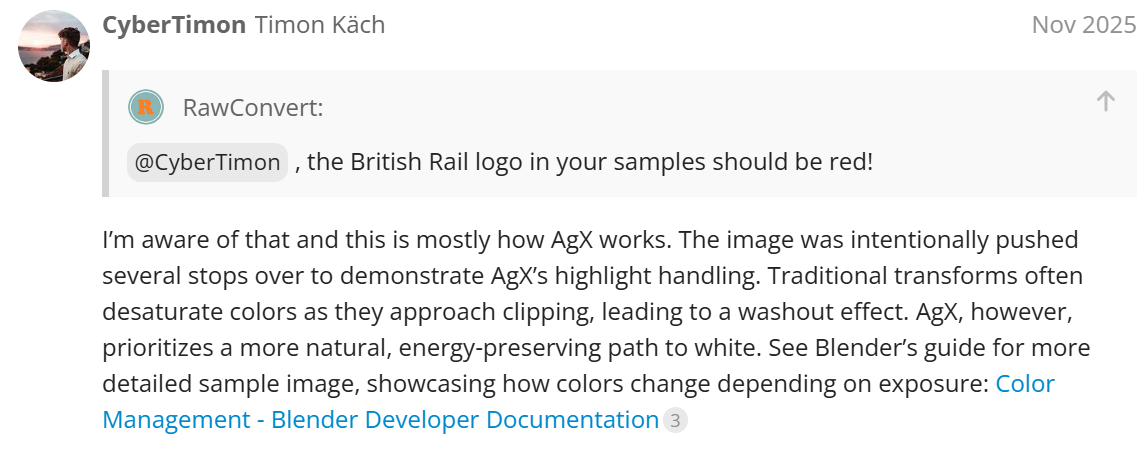

I confidently replied that this was just AgX doing its job: preventing the red channel from clipping and shifting the hue naturally.

Then, kofa, a prominent contributor and color science expert, stepped in with the receipts. He looked at my open-source shader code and asked: "Maybe the matrix is wrong? Where do these values come from? Are these row- or column-major matrices?" He then dropped the actual math from the Blender repositories to compare.

I looked at my code.

The AI hadn't just made a minor rounding error. It had confidently hallucinated the color matrices. It confused Rec.2020 primaries with sRGB primaries, mixed up row-major and column-major matrix layouts, and completely botched the derivation of the color space. Because I didn't actually understand the math, I hadn't verified the numbers. I was just vibecoding.
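To see why kofa's row- or column-major question matters: the same nine numbers describe two different matrices depending on how they are read. WGSL's mat3x3 constructor takes its scalar arguments column by column, so a matrix copied from a source that prints it row by row ends up transposed unless you account for that. Here is a minimal sketch in plain Rust. The values are made up for illustration and are not AgX coefficients, and the helper names are hypothetical, not taken from RapidRAW.

```rust
/// A 3x3 matrix stored as three rows: m[row][col].
type Mat3 = [[f32; 3]; 3];

/// Read nine numbers the way they are usually printed: row by row.
fn from_row_major(v: [f32; 9]) -> Mat3 {
    [
        [v[0], v[1], v[2]],
        [v[3], v[4], v[5]],
        [v[6], v[7], v[8]],
    ]
}

/// Read the same nine numbers column by column, the way a
/// column-major matrix constructor consumes them.
fn from_col_major(v: [f32; 9]) -> Mat3 {
    [
        [v[0], v[3], v[6]],
        [v[1], v[4], v[7]],
        [v[2], v[5], v[8]],
    ]
}

/// Matrix times column vector.
fn mul_vec(m: &Mat3, x: [f32; 3]) -> [f32; 3] {
    let mut out = [0.0; 3];
    for r in 0..3 {
        out[r] = m[r][0] * x[0] + m[r][1] * x[1] + m[r][2] * x[2];
    }
    out
}
```

Feeding both readings the toy values 0.5, 0.5, 0.0, 0.0, 1.0, 0.0, 0.25, 0.0, 0.75 and a pure red input [1, 0, 0], the row-major matrix returns [0.5, 0.0, 0.25] while the column-major one returns [0.5, 0.5, 0.0]. In this toy case the transposed reading leaks red into green, which is the direction that pushes red toward orange.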

Doing the Math

I realized I couldn’t treat color science as a black box. If I wanted to build a professional-grade RAW editor, I had to actually understand what the pixels were doing.

I spent the next day deep-diving into the pixls.us thread, reading through AgX posts, and studying the logic darktable uses to implement AgX. I threw out the AI's "magic numbers" entirely.

Instead of hardcoding a mysterious const AGX_INPUT_MATRIX, I wrote a proper Rust function using the glam math library to derive the exact matrices from scratch on the CPU, before passing them to the GPU.

I implemented the actual math: converting the sRGB primaries to XYZ space, establishing the base Rec.2020 profile, and applying the exact primary rotations and inset/outset scales that give AgX its signature look.

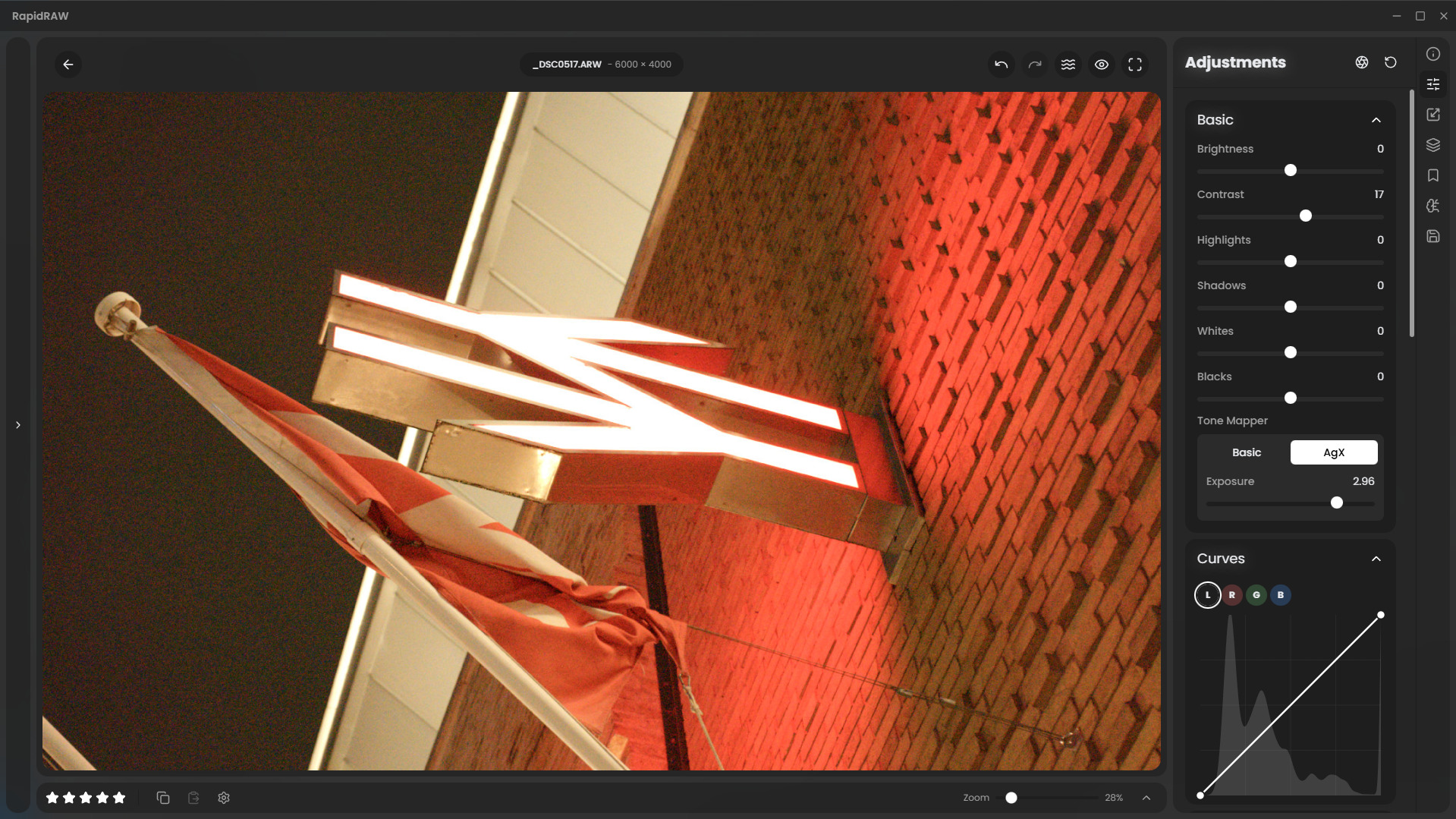
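For readers who want to try the first part of this themselves, here is a rough sketch of deriving an RGB-to-XYZ matrix from a color space's primaries and white point, and then chaining two of them into an sRGB-to-Rec.2020 conversion. It is not the RapidRAW code: it uses plain arrays instead of glam so it has no dependencies, all function and type names are hypothetical, and it deliberately stops before the AgX-specific primary rotations and inset/outset scaling. The chromaticity coordinates are the published sRGB and Rec.2020 values with a D65 white point.

```rust
type Mat3 = [[f64; 3]; 3]; // m[row][col]

/// CIE xy chromaticities of the three primaries and the white point.
struct Primaries { r: [f64; 2], g: [f64; 2], b: [f64; 2], white: [f64; 2] }

const SRGB: Primaries = Primaries {
    r: [0.640, 0.330], g: [0.300, 0.600], b: [0.150, 0.060], white: [0.3127, 0.3290],
};
const REC2020: Primaries = Primaries {
    r: [0.708, 0.292], g: [0.170, 0.797], b: [0.131, 0.046], white: [0.3127, 0.3290],
};

/// xy chromaticity to XYZ, with Y normalised to 1.
fn xy_to_xyz(xy: [f64; 2]) -> [f64; 3] {
    [xy[0] / xy[1], 1.0, (1.0 - xy[0] - xy[1]) / xy[1]]
}

fn mul(a: &Mat3, b: &Mat3) -> Mat3 {
    let mut out = [[0.0; 3]; 3];
    for r in 0..3 {
        for c in 0..3 {
            for k in 0..3 {
                out[r][c] += a[r][k] * b[k][c];
            }
        }
    }
    out
}

fn mul_vec(m: &Mat3, v: [f64; 3]) -> [f64; 3] {
    let mut out = [0.0; 3];
    for r in 0..3 {
        out[r] = m[r][0] * v[0] + m[r][1] * v[1] + m[r][2] * v[2];
    }
    out
}

/// Inverse via the adjugate. Assumes the matrix is non-singular.
fn inverse(m: &Mat3) -> Mat3 {
    // Cyclic indexing yields the signed cofactor directly.
    let cof = |r: usize, c: usize| {
        let (r1, r2) = ((r + 1) % 3, (r + 2) % 3);
        let (c1, c2) = ((c + 1) % 3, (c + 2) % 3);
        m[r1][c1] * m[r2][c2] - m[r1][c2] * m[r2][c1]
    };
    let det = m[0][0] * cof(0, 0) + m[0][1] * cof(0, 1) + m[0][2] * cof(0, 2);
    let mut out = [[0.0; 3]; 3];
    for r in 0..3 {
        for c in 0..3 {
            out[c][r] = cof(r, c) / det; // transpose of the cofactor matrix
        }
    }
    out
}

/// Linear RGB to XYZ for the given primaries.
fn rgb_to_xyz(p: &Primaries) -> Mat3 {
    let (r, g, b) = (xy_to_xyz(p.r), xy_to_xyz(p.g), xy_to_xyz(p.b));
    // Columns are the unscaled primaries.
    let unscaled: Mat3 = [[r[0], g[0], b[0]], [r[1], g[1], b[1]], [r[2], g[2], b[2]]];
    // Scale each primary so that RGB (1, 1, 1) lands on the white point.
    let s = mul_vec(&inverse(&unscaled), xy_to_xyz(p.white));
    let mut out = unscaled;
    for row in out.iter_mut() {
        for c in 0..3 {
            row[c] *= s[c];
        }
    }
    out
}

/// Linear sRGB to linear Rec.2020 (both D65, so no chromatic adaptation).
fn srgb_to_rec2020() -> Mat3 {
    mul(&inverse(&rgb_to_xyz(&REC2020)), &rgb_to_xyz(&SRGB))
}
```

Two cheap sanity checks on the result: the middle row of rgb_to_xyz(&SRGB) should come out close to the familiar luminance weights 0.2126, 0.7152, 0.0722, and every row of srgb_to_rec2020() should sum to 1, because both spaces share a white point and neutral gray has to stay neutral. A matrix that fails either check has probably been transposed or built from the wrong primaries.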

Once I had derived the pipe_to_rendering and rendering_to_pipe matrices properly, I fired up the app and pushed the exposure on the train image again.

The British Rail logo wasn't yellow anymore. It was a rich, beautiful, energy-preserving red.

What I've Learned From This

AI is an incredible tool. It allows me to build a GPU-accelerated RAW editor. But AI will also look you dead in the eye and confidently lie to you about linear algebra.

Vibecoding is great for UI or writing boilerplate, but it falls apart the second you touch precision math or domain-specific science. You have to put in the work to understand the underlying concepts, or your software will inevitably break in ways you can't debug.

More importantly, this whole experience was a beautiful reminder of why open-source is amazing. I didn't get ridiculed for making a mistake; I got incredibly detailed, patient explanations from experts who just wanted to see a cool project succeed.

A massive thank you to kofa and the rest of the pixls.us community. RapidRAW is undeniably better today because of you. And next time I implement a color transform, I’ll be checking my matrices twice ;).
